Synchronize Files With rsync

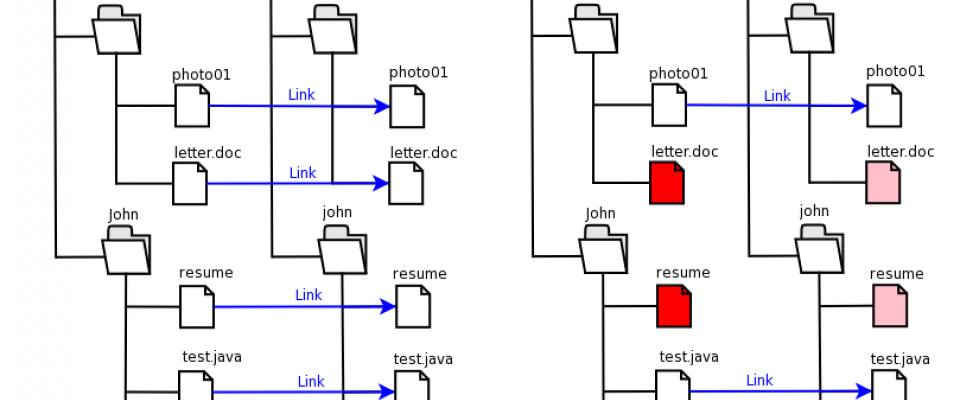

Synchronizing files from one server to another is quite awesome. You can use it for backups, for keeping web servers in sync, and much more. rsync is fast and uses less bandwidth than a plain copy, because it only transfers the files (and parts of files) that have changed. And the best thing is, it can be done with a single command. Welcome to the wonderful world of rsync.

Installing rsync

On most modern Linux distributions you will find rsync comes preinstalled. If that's not the case, just install it with your package manager. On Ubuntu this would look like:

$ sudo apt-get -y install rsync

Done!
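If you're not sure whether rsync is already there, a quick check like the sketch below will tell you. The function name rsync_status is made up for this example; it relies only on the shell's command -v builtin and on rsync --version.

```shell
#!/usr/bin/env bash
# rsync_status is a hypothetical helper name, not part of rsync.
# It prints the first line of the version banner, or a hint when rsync is missing.
rsync_status() {
    if command -v rsync >/dev/null 2>&1; then
        rsync --version | sed -n '1p'
    else
        echo "rsync is not installed"
    fi
}

rsync_status
```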

Simple - one command

Let's copy the contents of our local /home/holyguard/source to /home/holyguard/destination on the server server.example.com:

$ rsync -az --progress --size-only /home/holyguard/source/* server.example.com:/home/holyguard/destination/

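Explained:

- -a: archive mode; recurses into directories and preserves attributes like permissions, ownership, timestamps, etc.
- -z: compress; saves bandwidth but is harder on your CPU, so use it for slow/expensive connections only
- --progress: shows you the progress of all the files that are being synced
- --size-only: skip files whose size is the same on both sides, ignoring modification times. By default rsync compares size and modification time (checksums are only compared with --checksum), so this mostly helps when timestamps are unreliable. A file that changed without changing size will not be re-synced.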

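Note that this sync skips hidden files in the top level of the source directory, because the shell's * does not match names that start with a dot. If you want to include hidden files, drop the wildcard and write the source with a trailing slash, like this: /home/holyguard/source/. The trailing slash tells rsync to copy the contents of the directory rather than the directory itself; whether the destination ends in a slash makes no difference here.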
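To make the trailing-slash form hard to get wrong, you could wrap it in a small function. This is only a sketch: sync_contents is a hypothetical name, and it assumes plain -a is all you need.

```shell
#!/usr/bin/env bash
# sync_contents is a hypothetical helper name, not part of rsync.
# It copies the contents of a directory, hidden files included.
sync_contents() {
    local src="${1%/}"   # strip one trailing slash if present...
    local dest="$2"
    # ...then add exactly one: "src/" means "the contents of src",
    # so no shell wildcard is involved and dotfiles are copied too.
    rsync -a "$src/" "$dest"
}
```

Used with the paths from above:

$ sync_contents /home/holyguard/source server.example.com:/home/holyguard/destination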

Well, that's it! But read on if you want to learn how to automate this.

Advanced - automatic syncing with SSH keys

Alright, so syncing files on Linux is pretty easy. But what if we want to automate this? How do we keep rsync from asking for a password every time?

There are different ways to go about this, but the one I mostly use is SSH keys. Once your public key is installed on the destination server, the server recognizes you and lets you in without a password prompt. That lets us automate the synchronization with rsync.
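If you don't have a key on the server yet, the one-time setup could look like the sketch below. It assumes OpenSSH's ssh-keygen and ssh-copy-id are available and that a key without a passphrase is acceptable for your setup (a cron job cannot type one). make_sync_key is a made-up helper name and the key path is only an example.

```shell
#!/usr/bin/env bash
# make_sync_key is a hypothetical helper name.
# It creates a passphrase-less ed25519 key pair unless the key file already exists.
make_sync_key() {
    local key_file="$1"
    if [ ! -f "$key_file" ]; then
        mkdir -p "$(dirname "$key_file")"
        # -N "" sets an empty passphrase (an assumption for unattended use),
        # -q keeps ssh-keygen quiet.
        ssh-keygen -q -t ed25519 -N "" -f "$key_file"
    fi
}
```

Run it once by hand, then install the public half on the server:

$ make_sync_key ~/.ssh/id_ed25519
$ ssh-copy-id -i ~/.ssh/id_ed25519.pub server.example.com

ssh-copy-id asks for your password on the server one last time and appends the public key to ~/.ssh/authorized_keys there.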

Once your key is installed, open a terminal and type:

$ ssh server.example.com

It should not ask you for any password. Great! This means rsync can connect without a password prompt too!
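For a script, it helps to run the same check without any chance of a password prompt popping up. A sketch, assuming OpenSSH's BatchMode and ConnectTimeout options; can_login_with_key is a made-up name:

```shell
#!/usr/bin/env bash
# can_login_with_key is a hypothetical helper name.
# It returns 0 only if ssh can log in to the host without any interaction.
can_login_with_key() {
    local host="$1"
    # BatchMode=yes makes ssh fail instead of prompting for a password;
    # ConnectTimeout=5 limits how long the connection attempt may take.
    ssh -o BatchMode=yes -o ConnectTimeout=5 "$host" true
}
```

For example:

$ can_login_with_key server.example.com && echo "key login works"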

Let's create a sync script

So now just create a script /root/bin/syncdata.bash

$ $EDITOR /root/bin/syncdata.bash

that contains your rsync command:

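#!/usr/bin/env bash
# Sync the directory itself (trailing slash, no wildcard) so that --delete also removes top-level files
rsync -az --delete /home/holyguard/source/ server.example.com:/home/holyguard/destination/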

Save the file and exit (in nano: CTRL+O, then CTRL+X) and make it executable like this:

$ chmod a+x /root/bin/syncdata.bash
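Because --delete removes things on the other side, an unattended script benefits from a little paranoia. The sketch below is one possible hardening, not the only way to do it: run_sync is a hypothetical function name, the log file location is whatever you pass in, and refusing to sync from an empty source is a safety assumption of mine (think of an unmounted disk).

```shell
#!/usr/bin/env bash
# run_sync is a hypothetical helper name; arguments: source dir, destination, log file.
run_sync() {
    local src="${1%/}" dest="$2" log="$3"
    # Refuse to sync from a missing or empty source: combined with --delete,
    # that would wipe the destination.
    if [ ! -d "$src" ] || [ -z "$(ls -A "$src")" ]; then
        echo "$(date '+%F %T') source $src missing or empty, skipping" >>"$log"
        return 1
    fi
    if rsync -az --delete "$src/" "$dest" >>"$log" 2>&1; then
        echo "$(date '+%F %T') sync ok" >>"$log"
    else
        local status=$?   # exit code of the failed rsync
        echo "$(date '+%F %T') rsync failed with exit code $status" >>"$log"
        return "$status"
    fi
}
```

Inside syncdata.bash, the call could then be:

run_sync /home/holyguard/source server.example.com:/home/holyguard/destination/ /var/log/syncdata.log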

Schedule it to run every hour

And to have your data synchronized every hour, open up your crontab editor:

$ crontab -e

And add this line:

0 * * * * /root/bin/syncdata.bash

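That's it! Every hour, new and changed files are copied to server.example.com:/home/holyguard/destination/. Files that are deleted from /home/holyguard/source/ are also deleted at the destination, thanks to the --delete parameter. Keep in mind that --delete only cleans up directories that rsync is asked to sync as a whole, so the source must be given as a directory, not as a shell wildcard like source/*.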
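If you'd rather add the cron entry from a script than through the editor, something like this sketch works. merge_cron_line is a hypothetical helper: it reads an existing crontab on standard input and prints it back with the new line appended, unless that exact line is already there.

```shell
#!/usr/bin/env bash
# merge_cron_line is a hypothetical helper name.
# stdin: current crontab text; $1: the line to add; stdout: the merged crontab.
merge_cron_line() {
    local line="$1"
    local existing
    existing="$(cat)"
    # Print the existing entries unchanged (nothing if the crontab is empty).
    if [ -n "$existing" ]; then
        printf '%s\n' "$existing"
    fi
    # -F: fixed string, -x: whole line, so "*" is not treated as a pattern.
    if ! printf '%s\n' "$existing" | grep -Fxq -- "$line"; then
        printf '%s\n' "$line"
    fi
}
```

Assuming your cron accepts a new crontab on standard input (crontab - does this on most Linux systems), the entry can be installed like so:

$ crontab -l 2>/dev/null | merge_cron_line "0 * * * * /root/bin/syncdata.bash" | crontab -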

Some extra rsync command line options

Some extra arguments that might come in handy customizing your synchronization job:

- --delete: delete files remotely that no longer exist locally
- --dry-run: show what would have been transferred, but do not transfer anything
- --max-delete=10: don't delete more than 10 files in one run, as a safety precaution
- --delay-updates: put all updated files into place at the end of the transfer, very useful for live systems
- --compress-level=9: explicitly set compression level 9; level 0 disables compression
- --exclude-from=/root/sync_exclude: read exclude patterns (one per line) from /root/sync_exclude; filenames matching these patterns will not be transferred
- --bwlimit=1024: limit the transfer rate to 1024 kilobytes per second
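Several of these options combine nicely into a "look before you leap" run. The sketch below previews a sync with --dry-run before anything is copied or deleted. preview_sync is a made-up function name and the exclude file is whatever path you pass in; the flags themselves are standard rsync options (--itemize-changes prints one line per planned change).

```shell
#!/usr/bin/env bash
# preview_sync is a hypothetical helper name.
# Arguments: source dir, destination, file with exclude patterns (one per line).
preview_sync() {
    local src="${1%/}" dest="$2" exclude_file="$3"
    # --dry-run: change nothing, only report what would happen.
    # --max-delete=10: stays in place for when --dry-run is later removed.
    rsync -a --delete --max-delete=10 \
        --exclude-from="$exclude_file" \
        --dry-run --itemize-changes \
        "$src/" "$dest"
}
```

For example:

$ preview_sync /home/holyguard/source server.example.com:/home/holyguard/destination/ /root/sync_exclude

Once the output looks right, the same rsync command without --dry-run performs the real sync.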